🧠 Your Weekly AI Dose #5

Meta's Llama 3, GPT-4 Turbo with Vision API, TikTok's AI Profile Pictures, CodeGemma, and Infini-Transformer

Hi friends 👋,

Welcome to this week's edition of our newsletter!

IntelliGen is an AI open house hosted by AI industry builders and CMU alumni.

Each week, we bring you AI insights, product news, and research updates to keep you informed on the latest and greatest in AI.

Let’s get to it.

🧠 In Today’s Newsletter:

📢 Community Announcements

Check out our recommended AI learning resources, which are constantly updated!

Check out upcoming events and fresh job openings in the AI space!

⚡️ AI News You Should Know

Meta’s Llama 3 Coming Next Week

Adobe Trained Firefly on Midjourney

TikTok Explores AI Ad Avatars

Google: All-in on Generative AI at Google Cloud Next

OpenAI Makes GPT-4 Turbo with Vision API Generally Available

💡 Research Updates

Rho-1: Not All Tokens Are What You Need

Infini-Attention

CodeGemma

LM-Guided Chain-of-Thought

⚡️ AI News You Should Know

1. Meta’s Llama 3 Coming Next Week

Meta confirms plans to start rolling out Llama 3 models 'very soon'

Meta is expected to release a smaller version of Llama 3 as early as next week, with the full release planned for July. The model is anticipated to come in a range of sizes, from 7 billion to over 100 billion parameters, and to be open-source, making it easier for developers to integrate and use. It is also expected to be multimodal, understanding both text and visual inputs, and to be less cautious than its predecessor, Llama 2, which faced criticism for excessive moderation controls and strict guardrails. Meta is investing heavily in advanced AI systems, including purchasing hundreds of thousands of Nvidia H100 GPUs to train Llama 3 and other models.

2. Adobe Trained Firefly on Midjourney

Adobe’s ‘Ethical’ Firefly AI Was Trained on Midjourney Images

Adobe’s Firefly AI image generator, initially marketed as ethically trained on Adobe Stock images, was revealed to have been partially trained on AI-generated images from competitors like Midjourney. Adobe’s presentations and public statements about Firefly emphasized the safety and exclusivity of its training data and did not disclose that AI-generated content from these competitors was used to refine the model. The controversy highlights the complexities and ethical considerations in the development of AI technologies, especially regarding the sourcing and use of training data.

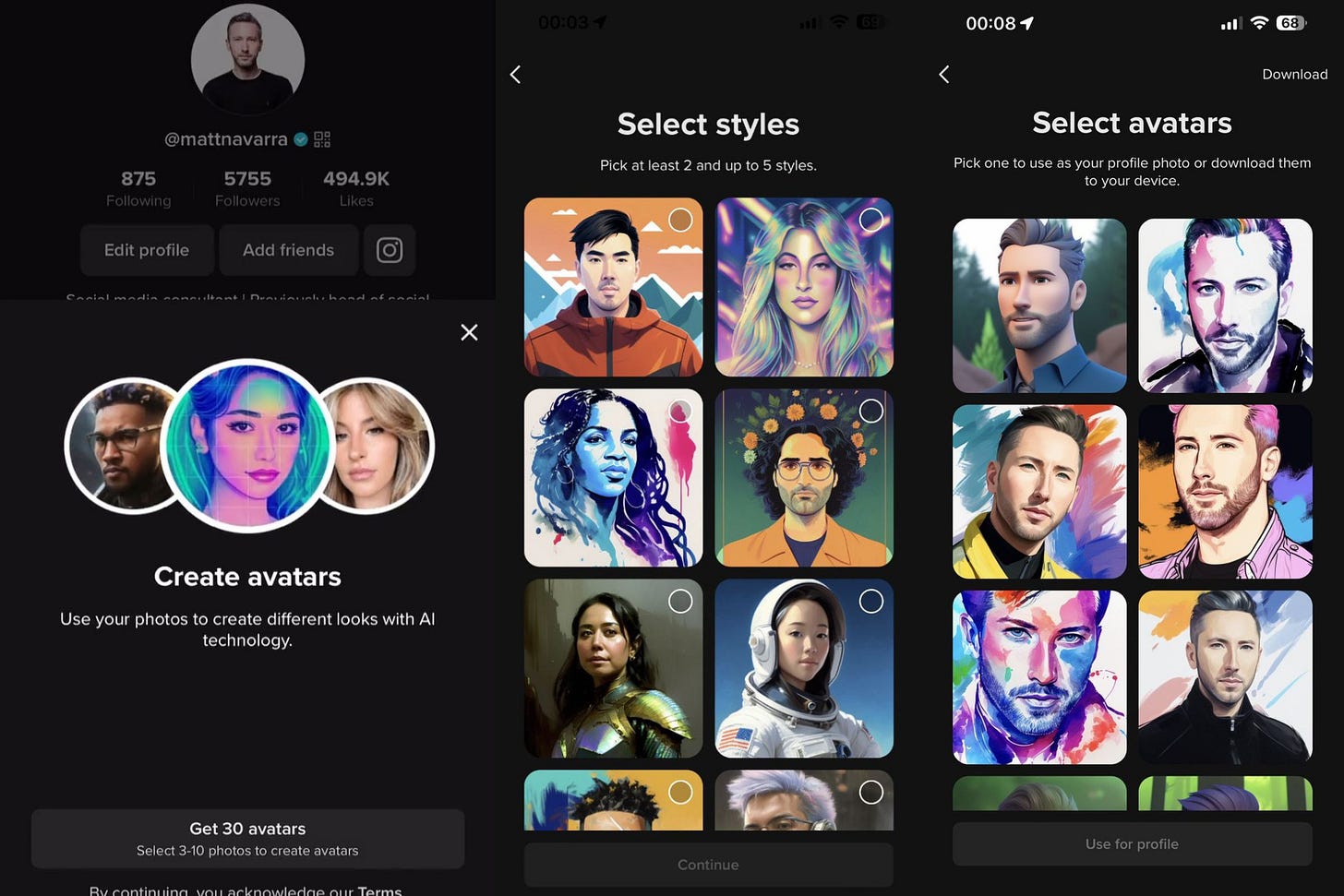

3. TikTok Explores AI Ad Avatars

Leak: TikTok is launching generative AI avatars, and here’s what they look like

TikTok is exploring AI-generated digital avatars for content creation and advertising, offering over 100 avatars that vary in appearance, accent, and language. The feature is part of TikTok’s broader integration of AI tools and is seen as a cost-effective way for businesses and content creators to produce content. TikTok is also working on a feature that lets users create AI-generated profile pictures. TikTok frames both features as part of its commitment to adding value to the community and enriching the TikTok experience, though they also raise challenges for the platform’s ecosystem and its users’ privacy.

4. Google: All-in on Generative AI at Google Cloud Next

Google goes all in on generative AI at Google Cloud Next

Google showcased its strong focus on generative AI at the recent Google Cloud Next conference, with a range of new announcements and capabilities. The company emphasized the importance of its Gemini large language model in powering a variety of generative AI applications, and unveiled new tools and services to help customers build and deploy their own generative AI models. However, some companies may struggle with the implementation of these solutions due to challenges around data quality and preparation, highlighting the need for careful evaluation of infrastructure and readiness before fully embracing Google’s generative AI offerings.

5. OpenAI Makes GPT-4 Turbo with Vision API Generally Available

OpenAI makes GPT-4 Turbo with Vision API generally available

OpenAI has announced the general availability of its GPT-4 Turbo model with advanced vision capabilities through its API, allowing enterprises and developers to integrate these powerful language and vision features into their applications. The GPT-4 Turbo model was first unveiled at OpenAI’s developer conference in November 2023, building on the initial release of GPT-4’s vision and audio upload features in September 2023. The API launch marks a significant milestone, as it makes OpenAI’s latest large language model with integrated computer vision available to a wider range of users and use cases, further expanding the possibilities for intelligent, multimodal AI applications across industries.

💡 Top AI Research Updates

1. Rho-1: Not All Tokens Are What You Need

Rho-1 is a novel language model that challenges the traditional approach of uniformly applying a next-token prediction loss to all training tokens. The paper’s key insight is that “not all tokens in a corpus are equally important for language model training.” By analyzing token-level training dynamics, the researchers introduce Selective Language Modeling (SLM), which selectively trains the model on useful tokens aligned with the desired distribution. This is achieved by scoring pretraining tokens using a reference model and then focusing the training loss on tokens with higher excess loss, that is, tokens where the model being trained does noticeably worse than the reference model. When continually pretrained on the 15B-token OpenWebMath corpus, Rho-1 yielded an absolute improvement of up to 30% in few-shot accuracy across 9 math tasks. After fine-tuning, the Rho-1 models (1B and 7B) achieved state-of-the-art results on the MATH dataset, matching DeepSeekMath with only 3% of the pretraining tokens. Furthermore, Rho-1 demonstrated a 6.8% average enhancement across 15 diverse tasks when pretrained on 80B general tokens, showcasing improved efficiency and performance compared to traditional language model pretraining.
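To make the token-selection idea concrete, here is a minimal sketch of a selective loss in plain Python. It is our own illustration, not the authors' code: it assumes the "excess loss" of a token is the training model's loss minus the reference model's loss, and the function name `selective_lm_loss` and the `keep_ratio` value are hypothetical.

```python
import math


def selective_lm_loss(train_losses, ref_losses, keep_ratio=0.5):
    """Average the training loss over only the highest-scoring tokens.

    train_losses: per-token loss under the model being trained
    ref_losses:   per-token loss under a frozen reference model
    keep_ratio:   fraction of tokens to keep (arbitrary, for illustration)
    """
    if not train_losses or len(train_losses) != len(ref_losses):
        raise ValueError("need two non-empty loss lists of equal length")

    # Assumed scoring rule: excess loss = training loss - reference loss.
    excess = [t - r for t, r in zip(train_losses, ref_losses)]

    # Keep the k tokens with the highest excess loss.
    k = max(1, math.ceil(keep_ratio * len(excess)))
    ranked = sorted(range(len(excess)), key=lambda i: excess[i], reverse=True)
    selected = sorted(ranked[:k])

    # Only the selected tokens contribute to the loss.
    loss = sum(train_losses[i] for i in selected) / k
    return loss, selected


# Toy example with four tokens.
loss, kept = selective_lm_loss([2.0, 0.5, 3.0, 1.0], [1.0, 0.4, 1.0, 1.5])
print(kept, loss)
```

In a real training loop the same mask would be applied to per-token cross-entropy tensors before backpropagation; the list version above only shows the selection logic.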

2. Leave No Context Behind: Efficient Infinite Context Transformers with Infini-attention

The paper introduces an efficient method to scale Transformer-based large language models to infinitely long inputs with bounded memory and computation. The key component is a new attention technique called Infini-attention, which incorporates a compressive memory into the vanilla attention mechanism and combines masked local attention and long-term linear attention in a single Transformer block. The authors demonstrate the effectiveness of their approach on long-context language modeling, passkey context retrieval, and book summarization tasks with large language models of 1 billion and 8 billion parameters. Their method enables fast streaming inference for LLMs by introducing minimal bounded memory parameters.
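The sketch below illustrates how a compressive memory can sit alongside local attention for a single head, using NumPy. It is a simplified reading of the mechanism, not the paper's implementation: the function names, the scalar `gate`, the small epsilon in the denominator, and the ELU+1 feature map are assumptions made for illustration.

```python
import numpy as np


def elu_plus_one(x):
    # Positive feature map: ELU(x) + 1 (x + 1 for x > 0, exp(x) otherwise).
    return np.where(x > 0, x + 1.0, np.exp(np.minimum(x, 0.0)))


def causal_softmax_attention(q, k, v):
    # Standard masked (causal) dot-product attention within one segment.
    scores = q @ k.T / np.sqrt(q.shape[-1])
    future = np.triu(np.ones_like(scores, dtype=bool), k=1)
    scores = np.where(future, -np.inf, scores)
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ v


def infini_attention_segment(q, k, v, memory, norm, gate):
    """Process one segment; return output and the updated memory state.

    q, k: (n, d_k)   v: (n, d_v)
    memory: (d_k, d_v) and norm: (d_k,) summarize all earlier segments.
    gate: scalar mixing weight before the sigmoid (learned in practice).
    """
    sq, sk = elu_plus_one(q), elu_plus_one(k)

    # Long-term read: linear-attention lookup into the compressive memory.
    a_mem = (sq @ memory) / ((sq @ norm)[:, None] + 1e-8)

    # Short-term read: masked local attention over the current segment.
    a_local = causal_softmax_attention(q, k, v)

    # Blend the two reads with a sigmoid gate.
    g = 1.0 / (1.0 + np.exp(-gate))
    out = g * a_mem + (1.0 - g) * a_local

    # Write the current segment into memory; its size never grows.
    return out, memory + sk.T @ v, norm + sk.sum(axis=0)


# Stream several segments through a fixed-size memory.
rng = np.random.default_rng(0)
d_k, d_v, seg_len = 4, 4, 3
memory, norm = np.zeros((d_k, d_v)), np.zeros(d_k)
for _ in range(5):
    q, k, v = (rng.normal(size=(seg_len, d)) for d in (d_k, d_k, d_v))
    out, memory, norm = infini_attention_segment(q, k, v, memory, norm, gate=0.0)
print(out.shape, memory.shape)
```

The point of the sketch is the bounded state: however many segments are streamed, `memory` and `norm` keep the same shape, which is what allows streaming inference over very long inputs.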

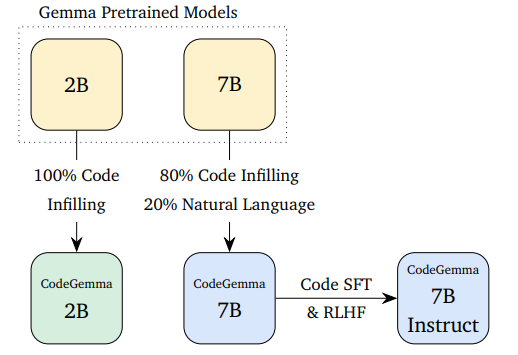

3. CodeGemma - an official Google release for code LLMs

CodeGemma is a family of open-access versions of Gemma specialized in code, released by Google in collaboration with Hugging Face. The significance of CodeGemma lies in its ability to bridge the gap between natural language and code, with the models demonstrating strong performance on a variety of code-related tasks such as code completion, code generation, and code translation. The CodeGemma models come in three flavors: a 2B base model for code infilling and open-ended generation, a 7B base model trained on both code and natural language, and a 7B instruct model that users can chat with about code. These models have been integrated into the Hugging Face ecosystem, making them easily accessible to researchers and developers and allowing for further research and development in the field of natural language processing and code generation. The benchmark results for the CodeGemma models have been promising: on the HumanEval benchmark for evaluating code models on Python, the CodeGemma-7B model outperforms similarly-sized 7B models except for DeepSeek-Coder-7B; on the GSM8K benchmark, the CodeGemma-7B model performs best among the 7B models.

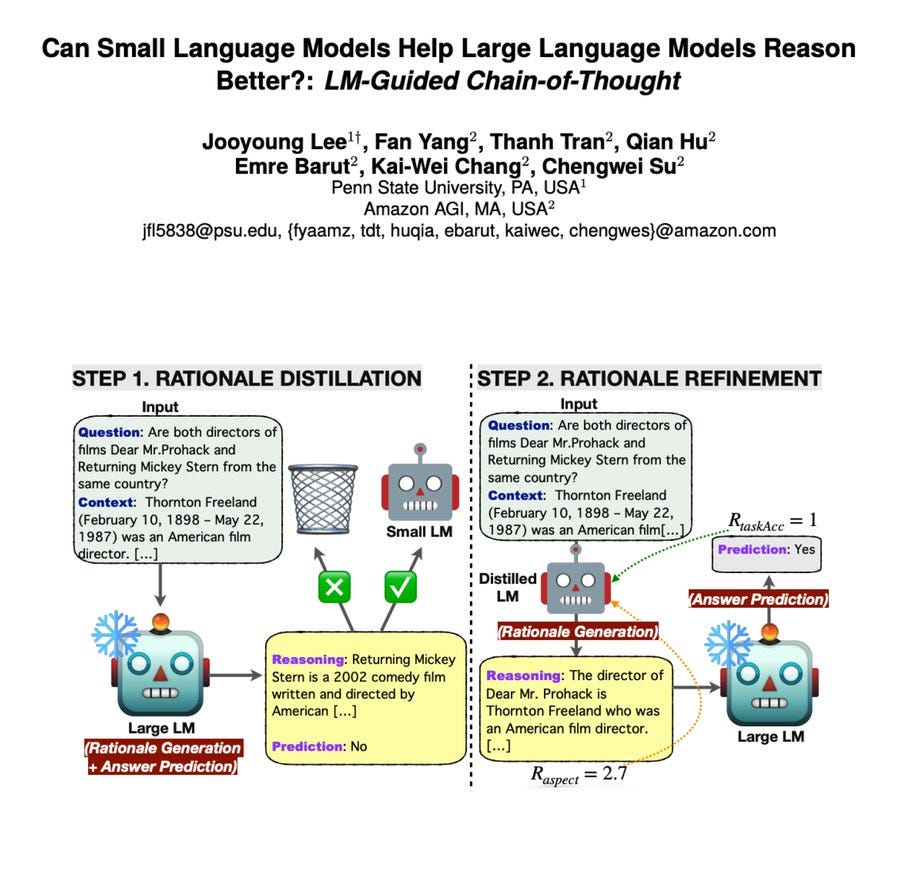

4. Can Small Language Models Help Large Language Models Reason Better?: LM-Guided Chain-of-Thought

The paper introduces an innovative framework called LM-Guided CoT that leverages a lightweight language model to guide a large language model in reasoning tasks. By first generating a rationale using the lightweight model and then prompting the large model with it, the approach outperforms all baselines in answer prediction accuracy. Interestingly, the authors found that reinforcement learning helps the model produce higher-quality rationales, leading to improved performance. This insight highlights the potential of using smaller models to enhance the reasoning capabilities of larger language models. Overall, the research demonstrates a clever way to combine the strengths of different-sized models, paving the way for more efficient and effective language AI systems.
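The two-step flow described above can be sketched as a small pipeline. This is only an illustration under our own assumptions: `small_lm` and `large_lm` are hypothetical callables that map a prompt string to generated text, and the prompt wording is made up rather than taken from the paper.

```python
from typing import Callable, Dict


def lm_guided_cot(
    question: str,
    small_lm: Callable[[str], str],
    large_lm: Callable[[str], str],
) -> Dict[str, str]:
    """Two-step reasoning: small model writes a rationale, large model answers."""
    # Step 1: the lightweight model produces a step-by-step rationale.
    rationale_prompt = (
        f"Question: {question}\n"
        "Explain step by step how to answer this question."
    )
    rationale = small_lm(rationale_prompt).strip()

    # Step 2: the large model answers, conditioned on that rationale.
    answer_prompt = (
        f"Question: {question}\n"
        f"Rationale: {rationale}\n"
        "Answer:"
    )
    answer = large_lm(answer_prompt).strip()
    return {"rationale": rationale, "answer": answer}


# Stub models stand in for real LLM calls so the sketch runs offline.
def stub_small(prompt: str) -> str:
    return "Two apples plus three apples gives five apples."


def stub_large(prompt: str) -> str:
    return "5" if "five apples" in prompt else "unknown"


result = lm_guided_cot("What is 2 apples + 3 apples?", stub_small, stub_large)
print(result)
```

In this setup only the small model would need to be trained (for example with the reinforcement learning step the authors mention), while the large model is used as-is through prompting.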

📢 Community Announcements

Exciting news for our AI enthusiasts and professionals! We’re creating a comprehensive resource hub designed to cater to all your AI-related needs. Whether you’re just starting your journey in the fascinating world of artificial intelligence or you’re a seasoned expert looking to deepen your expertise, our upcoming platform will be your go-to destination.

What’s in Store?

AI Learning Resources: Dive into the AI space with our curated AI learning resources. We will be constantly updating with new content that we believe will benefit AI learners and enthusiasts!

Upcoming Events: Stay ahead of the curve with our curated list of AI events, from builder meetups to founder talks. Whether you prefer in-person gatherings or virtual meetups, there’s something for everyone.

Job Opportunities: Looking to kickstart your career in AI or eyeing your next big move? Our job board will feature a diverse range of openings from startups to big tech. This is your chance to join the forefront of AI innovation.

🙌 Follow Us

Join our expanding AI community! We're seeking enthusiasts like you to connect and collaborate. Dive into any of the channels below and become part of the conversation!

🌱 Join the Community

Our Slack community, tailored for AI enthusiasts like you, is live!

By joining, you'll benefit from:

🎙 Live Sessions with leading AI innovators - dive deep into discussions and expand your horizons.

📅 Exclusive Updates on offline events – be the first to know and get involved.

🤝 Network with a passionate community of AI builders - collaborate, learn, and grow with like-minded enthusiasts.
