🧠 Your Weekly AI Dose #3

Hume AI's EVI, Amazon's Recent Anthropic Investment, Apple WWDC, and the Open-Source Alternative to Sora

Hi friends 👋,

Welcome to this week's edition of our newsletter!

IntelliGen is an AI open house hosted by AI industry builders and CMU alumni.

Each week, we bring you AI insights along with product and research updates to keep you informed on the latest and greatest in AI.

Let’s get to it.

🧠 In Today’s Newsletter:

📢 Community Announcements

We are building an integrated resource hub for all things AI

Check out upcoming events and fresh job openings in the AI space!

⚡️ AI News You Should Know

OpenAI Reveals Audio Tool that Mimics Human Voice

Hume Releases Empathic Voice Interface (EVI) — The First Conversational AI with Emotional Intelligence

Amazon Invests $2.75 Billion in Anthropic

Meta Adds AI to Smart Glasses

Apple’s Worldwide Developers Conference is Set for June 10, 2024

💡 Research Updates

AIOS: LLM Agent Operating System

InterHandGen: Two-Hand Interaction Generation via Cascaded Reverse Diffusion

Mora: Enabling Generalist Video Generation via A Multi-Agent Framework

Arc2Face: A New Identity-Conditioned Face Foundation Model

⚡️ AI News You Should Know

1. OpenAI Reveals Audio Tool that Mimics Human Voice


OpenAI has developed a voice cloning model called "Voice Engine" that can recreate a person's voice from a single 15-second audio sample. It generates synthetic speech that closely mimics the original speaker, even in languages other than the one in the sample. OpenAI has given early access to a small group of developers but is holding back a wider release because of the potential for misuse, such as "deepfake" audio recordings. The model is a significant step forward in AI-generated audio, and OpenAI is working to understand and address these risks before making it more widely available.

2. Hume Announces Empathic Voice Interface (EVI)


Hume AI has announced its new flagship product, the Empathic Voice Interface (EVI), alongside a $50 million Series B funding round. Hume describes EVI as the world's first emotionally intelligent voice AI: a conversational, voice-to-voice system designed to understand and respond to human emotions by interpreting vocal tone, with the goal of making conversations feel more natural and satisfying. EVI is powered by an empathic large language model (eLLM) and can be integrated into applications with a single API call, with potential uses in customer service, healthcare, and more.

3. Amazon Invests $2.75 Billion in Anthropic, Completing $4 Billion Investment


Amazon has invested an additional $2.75 billion in AI startup Anthropic (maker of Claude 3). Together with its initial $1.25 billion, this completes Amazon's planned $4 billion investment, though Amazon will hold only a minority stake in the San Francisco-based startup. Anthropic will use AWS as its primary cloud provider and Amazon's custom chips to build, train, and deploy its AI models.

4. Meta Adds AI to its Ray-Ban Smart Glasses


Meta is rolling out new AI features for its Ray-Ban smart glasses, including multimodal AI that lets the glasses answer questions about whatever the wearer is looking at. The assistant can translate text, identify objects, animals, and landmarks, and suggest matching clothing items. However, these capabilities are still in an early testing phase and do not always work accurately or consistently: the glasses struggled to identify some real-world objects and animals. Meta plans to keep refining the features as it gathers user feedback; for now, the implementation still has room for improvement.

5. Apple’s Worldwide Developers Conference is Set for June 10, 2024


Apple’s WWDC 2024 runs June 10-14 and is expected to showcase major updates across Apple's software platforms, including iOS 18, the next version of macOS, and further enhancements to visionOS, the operating system powering the Apple Vision Pro mixed reality headset. Rumors suggest Apple may also bring more generative AI capabilities to its devices, potentially through a partnership with Google to use its Gemini models. While details are still scarce, the conference will likely offer an early look at Apple's software roadmap for the year ahead and may tease new hardware. WWDC 2024 will be held online, with an in-person event for developers at Apple Park on opening day.

💡 Top AI Research Updates

1. AIOS: LLM Agent Operating System

Paper link: https://arxiv.org/abs/2403.16971; GitHub

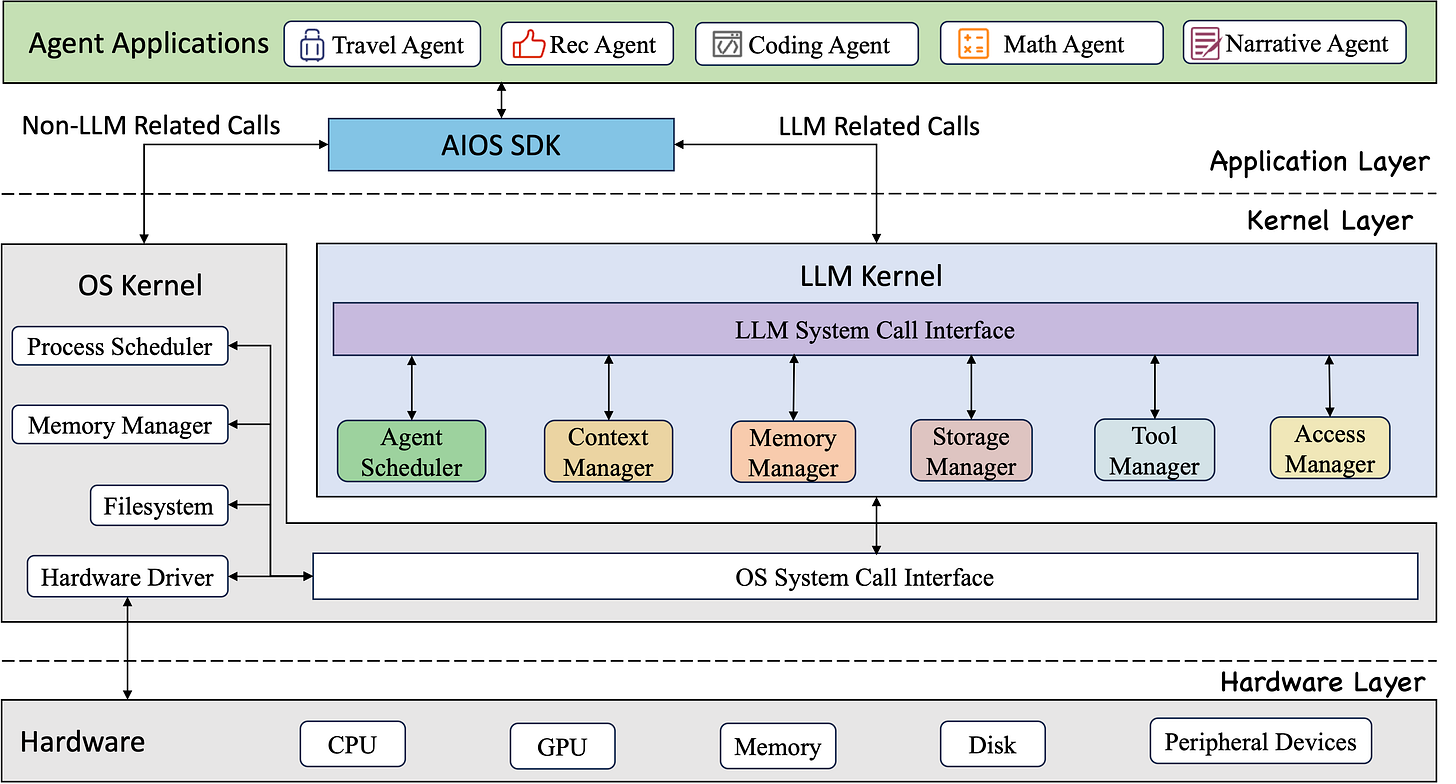

Integrating and deploying LLM-based intelligent agents is hampered by sub-optimal scheduling, difficulty maintaining context, and the complexity of combining heterogeneous agents. To address these issues, the paper introduces AIOS, an operating system designed specifically for LLM agents that aims to optimize resource allocation, facilitate context management, enable concurrent execution, provide tool services, and enforce access control. AIOS embeds the LLM into the operating system itself, creating a more efficient platform for developing and deploying LLM agents, and the authors report performance improvements in their experiments. The system is open source, and its code is available for the community to use and contribute to.
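The summary above mentions scheduling and context management for agents that share one LLM. As a toy illustration only (not code from AIOS), the Python sketch below time-slices a shared LLM across agents with round-robin scheduling, one classic OS policy: each request runs for at most `quantum` steps per turn, and a preempted request keeps its progress (a stand-in for a saved context) so it can resume later. The `AgentRequest` class, the step counts, and the `quantum` parameter are all hypothetical.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class AgentRequest:
    """Hypothetical LLM call from one agent that needs `steps_needed` decoding steps."""
    agent: str
    steps_needed: int
    steps_done: int = 0  # stand-in for saved context: progress made so far

    @property
    def finished(self) -> bool:
        return self.steps_done >= self.steps_needed


def round_robin(requests: list[AgentRequest], quantum: int) -> list[tuple[str, int]]:
    """Toy sketch (not AIOS's implementation): share one LLM among agents.

    Each turn grants a request at most `quantum` steps. Returns a trace of
    (agent, steps_run) slices in execution order.
    """
    if quantum <= 0:
        raise ValueError("quantum must be positive")
    queue = deque(requests)
    trace: list[tuple[str, int]] = []
    while queue:
        req = queue.popleft()
        # Resume from the saved progress and run one time slice.
        run = min(quantum, req.steps_needed - req.steps_done)
        req.steps_done += run
        trace.append((req.agent, run))
        if not req.finished:
            # Preempt: progress is kept on the request so it can resume later.
            queue.append(req)
    return trace


if __name__ == "__main__":
    reqs = [
        AgentRequest("travel_agent", 5),
        AgentRequest("math_agent", 2),
        AgentRequest("rec_agent", 4),
    ]
    for agent, steps in round_robin(reqs, quantum=2):
        print(f"{agent}: ran {steps} step(s)")
```

With `quantum=2`, the short request finishes in its first turn while the longer ones are interleaved, so no single agent monopolizes the model. A FIFO policy would instead run each request to completion in arrival order.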

2. InterHandGen: Two-Hand Interaction Generation via Cascaded Reverse Diffusion

Paper link: https://arxiv.org/abs/2403.17422; GitHub

This paper introduces InterHandGen, a framework for generating plausible and diverse two-hand interactions, with or without objects. Modeling the joint distribution of two interacting hands directly is complex, so InterHandGen decomposes it into simpler unconditional and conditional single-hand distributions. By modeling these with diffusion models, it captures the intricate dynamics of two-hand interactions and synthesizes high-quality hand poses and shapes. This capability matters for augmented and virtual reality and for human-computer interaction, where understanding and replicating human hand movements is essential.
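The decomposition described above follows from the chain rule of probability. A minimal sketch in our own notation (not necessarily the paper's): x1 and x2 are the pose and shape parameters of the two hands, and c is an optional object condition that is simply dropped for hand-only generation. The joint distribution factors exactly as

```latex
p(\mathbf{x}_1, \mathbf{x}_2 \mid \mathbf{c})
  = \underbrace{p(\mathbf{x}_1 \mid \mathbf{c})}_{\text{one hand}}
    \; \underbrace{p(\mathbf{x}_2 \mid \mathbf{x}_1, \mathbf{c})}_{\text{other hand, given the first}}
```

Sampling can then be cascaded: one reverse-diffusion pass draws the first hand, and a second pass draws the other hand conditioned on it. Each factor describes a single hand, which is what makes it easier to learn than the full joint distribution.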

3. Mora: Enabling Generalist Video Generation via A Multi-Agent Framework

Paper link: https://arxiv.org/abs/2403.13248; GitHub

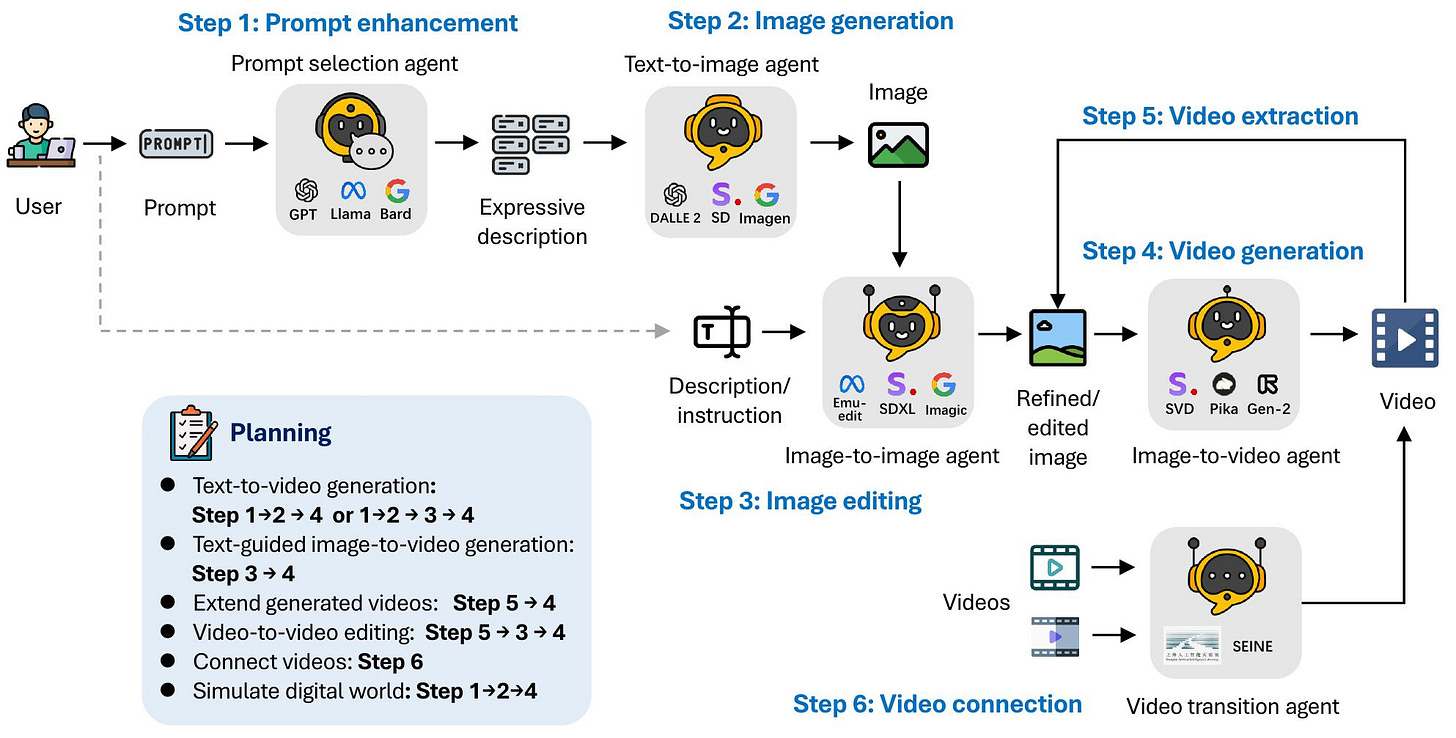

The paper introduces Mora, a multi-agent framework designed to close the gap in generalist video generation exposed by the launch of OpenAI's Sora. While Sora demonstrated unprecedented text-to-video performance, it remains largely inaccessible because it is closed source. Mora addresses this by coordinating multiple advanced visual AI agents to mimic Sora’s functionality, supporting tasks such as text-to-video generation, image-to-video generation, video editing, and more. Although it still trails Sora in performance, Mora's open-source framework is a significant step forward for collaborative AI agents in video generation, and the project aims to guide future work by offering a versatile, publicly available tool for the research community and beyond.

4. Arc2Face: A New Identity-Conditioned Face Foundation Model

Paper link: https://arxiv.org/abs/2403.11641; GitHub

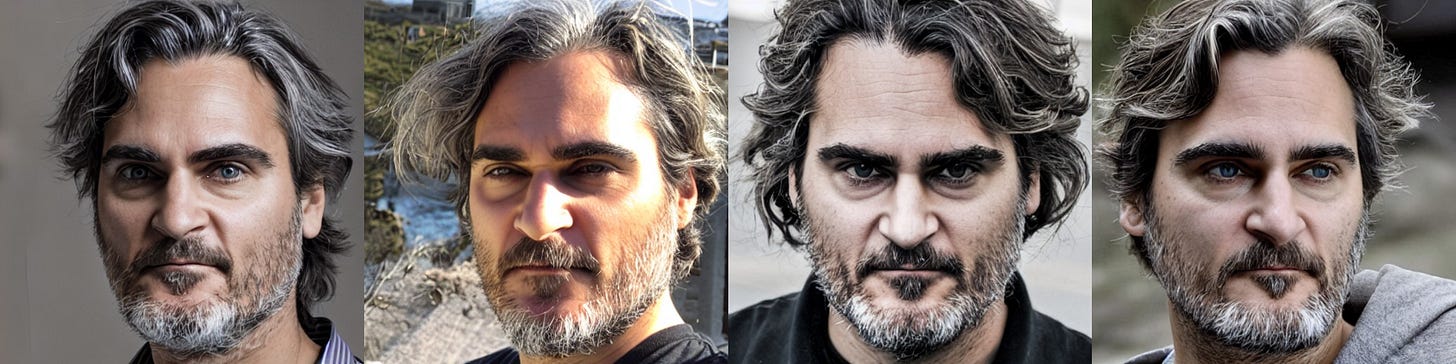

The paper introduces Arc2Face, a foundation model that generates high-quality, photorealistic facial images from identity embeddings (ArcFace embeddings). Existing models often struggle to preserve identity when generating faces; Arc2Face instead combines a large training dataset with a novel identity-conditioning technique to achieve higher face similarity and identity consistency. It conditions generation solely on identity vectors, with no text descriptions or manual prompt engineering, which makes it a more robust prior for tasks that require consistent identity representation.

📢 Community Announcements

Exciting news for our AI enthusiasts and professionals! We’re creating a comprehensive resource hub designed to cater to all your AI-related needs. Whether you’re just starting your journey in the fascinating world of artificial intelligence or you’re a seasoned expert looking to deepen your expertise, our upcoming platform will be your go-to destination.

What’s in Store?

Upcoming Events: Stay ahead of the curve with our curated list of AI events, from builder meetups to founder talks. Whether you prefer in-person gatherings or virtual meetups, there's something for everyone.

Job Opportunities: Looking to kickstart your career in AI or eyeing your next big move? Our job board will feature a diverse range of openings from startups to big tech. This is your chance to join the forefront of AI innovation.

🙌 Follow Us

Join our expanding AI community! We're seeking enthusiasts like you to connect and collaborate. Dive into any of the channels below and become part of the conversation!

🌱 Join the Community

Our Slack community, tailored for AI enthusiasts like you, is live!

By joining, you'll benefit from:

🎙 Live Sessions with leading AI innovators - dive deep into discussions and expand your horizons.

📅 Exclusive Updates on offline events – be the first to know and get involved.

🤝 Network with a passionate community of AI builders - collaborate, learn, and grow with like-minded enthusiasts.